Flow matching (FM) is a method that draws $d$-dimensional ($x \in \mathbb{R}^d$) samples from any (data) distribution $p_{\text{data}}(x)$.

FM “transforms” noise to data by means of an ordinary differential equation (ODE). The general FM procedure goes like this:

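- i) Choose an initial (noise) distribution $p_0$, from which the starting point $x_0$ is drawn.

- ii) Choose the so-called marginal vector field $u_t(x) \in \mathbb{R}^d$ for all $t \in [0,1]$ and $x \in \mathbb{R}^d$.

- iii) Let $x_t$ evolve from $t=0$ to $t=1$ according to the ODE:

  $$\frac{d x_t}{dt} = u_t(x_t) \tag{1}$$

- iv) The final point $x_1$ is distributed as $p_{\text{data}}$.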
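To make steps i) to iii) concrete, here is a minimal sketch that integrates an ODE of the form (1) with the forward Euler method. The function name `euler_integrate`, the step count, and the constant toy vector field are illustrative assumptions, not part of FM itself; any ODE solver could be used instead.

```python
import numpy as np

def euler_integrate(u, x0, n_steps=100):
    """Integrate dx/dt = u(t, x) from t=0 to t=1 with forward Euler steps."""
    x = np.array(x0, dtype=float)
    dt = 1.0 / n_steps
    for k in range(n_steps):
        t = k * dt
        x = x + dt * u(t, x)
    return x

# Step i): noise samples from a standard Gaussian (an assumed choice of p_0).
rng = np.random.default_rng(0)
x0 = rng.standard_normal((1000, 2))

# Step ii): a toy (hypothetical) vector field with constant velocity `shift`.
shift = np.array([3.0, -1.0])
toy_field = lambda t, x: np.broadcast_to(shift, x.shape)

# Step iii): evolve every sample from t=0 to t=1.
x1 = euler_integrate(toy_field, x0)
```

With this toy field every sample is simply translated by `shift`; the rest of the post is about choosing a field for which the final points follow the data distribution instead.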

We will show which choice of the marginal vector field ensures that item iv) is satisfied, i.e., FM manages to transform noise to data with the correct distribution.

(Notation remarks: Random variables are denoted in capital letters ($X$), while their values are denoted in lowercase ($x$). Generic probability density functions are denoted as $p(\cdot)$.)

1. A first attempt

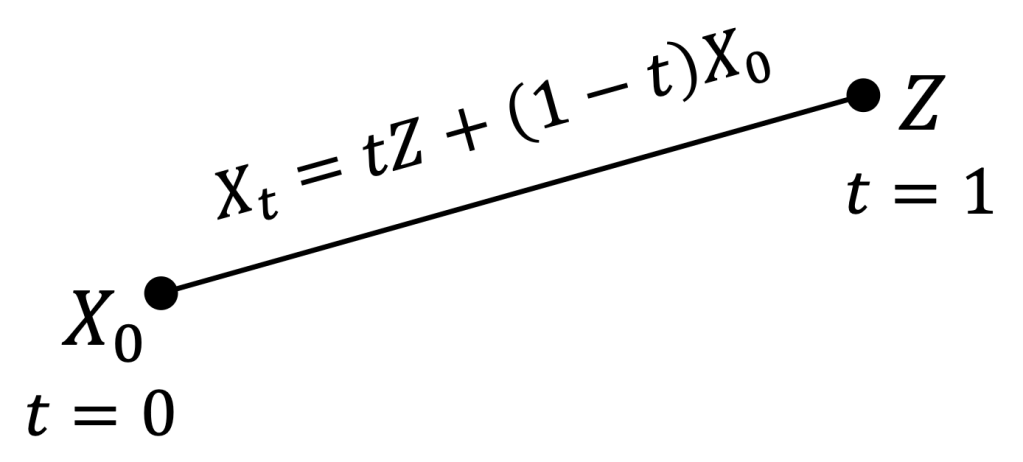

The simplest idea that comes to mind is to transform noise to data via straight lines connecting noise and data points, and hope that there exists an associated ODE that does the same thing. In other words,

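- 1) Define a so-called coupling distribution $\pi(x_0, x_1)$ between initial noise $x_0$ and data $x_1$, subject only to the constraint that its marginals are the noise and data distributions:

  $$\int \pi(x_0, x_1)\, dx_1 = p_0(x_0), \qquad \int \pi(x_0, x_1)\, dx_0 = p_{\text{data}}(x_1) \tag{2}$$

- 2) Draw random noise and data pairs $(X_0, X_1) \sim \pi$.

- 3) Connect each pair $(X_0, X_1)$ with a straight line

  $$X_t = (1-t)\, X_0 + t\, X_1 \tag{3}$$

  defined for all $t \in [0,1]$.

- 4) For each point $x$ and time $t$, find the (single!) pair of points $(x_0, x_1)$ such that the straight line between them passes through $x$ at time $t$, i.e., $(1-t)\, x_0 + t\, x_1 = x$.

- 5) Define $u_t(x) = x_1 - x_0$.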

In this way, a trajectory that starts at $x_0$ and reaches $x$ at time $t$ moves, after an infinitesimal time $dt$, to the point $x + u_t(x)\, dt$, which still lies on the line connecting $x_0$ and $x_1$. Thus, $x_t = x_1$ at the final time $t = 1$.
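Steps 1) to 3) can be sketched in a few lines. The example below assumes the simplest coupling, in which noise and data are drawn independently, a standard Gaussian as noise, and a hypothetical two-cluster toy dataset; the names `sample_pairs` and `interpolate` are made up for illustration.

```python
import numpy as np

def sample_pairs(n, rng):
    """Draw (x0, x1) pairs from an independent coupling (an assumed choice)."""
    x0 = rng.standard_normal((n, 2))                 # noise samples
    centers = np.array([[-2.0, 0.0], [2.0, 0.0]])    # toy data: two points
    x1 = centers[rng.integers(0, 2, size=n)]         # data samples
    return x0, x1

def interpolate(x0, x1, t):
    """Point at time t on the straight line from x0 (t=0) to x1 (t=1)."""
    return (1.0 - t) * x0 + t * x1

rng = np.random.default_rng(0)
x0, x1 = sample_pairs(500, rng)

# The direction of each line is constant in time and equal to x1 - x0.
t, h = 0.4, 1e-3
velocity = (interpolate(x0, x1, t + h) - interpolate(x0, x1, t)) / h
```

The finite-difference `velocity` coincides with `x1 - x0` for every pair, which is the direction that step 5) assigns to the point reached at time `t`.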

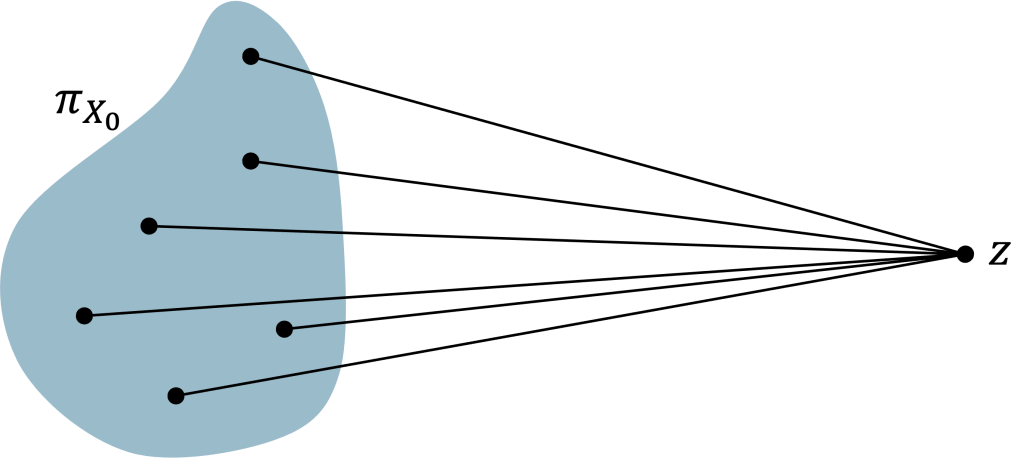

2. When things go well: Data distribution is a Dirac delta

In the special case where the data distribution is a Dirac delta, i.e., $p_{\text{data}}(x) = \delta(x - x_1)$, the pair $(x_0, x_1)$ found in step 4) is unique and the procedure above is well-defined.

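In this case, the evolution of $x_t$ is governed by the ODE

$$\frac{d x_t}{dt} = x_1 - x_0 = \frac{x_1 - x_t}{1-t} =: u_t(x_t \mid x_1) \tag{4}$$

where $u_t(x \mid x_1) = \frac{x_1 - x}{1-t}$ is the so-called conditional vector field.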

Clearly, $x_t = x_1$ at the final time $t = 1$, i.e., the final point is distributed as $\delta(x - x_1) = p_{\text{data}}(x)$. So, this simple model manages to transform noise to data with the correct distribution.

(Note: in (4) we rewrote $x_0$ as a function of the current point $x_t$, namely $x_0 = \frac{x_t - t\, x_1}{1-t}$, because the vector field should not depend on the initial condition.)
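As a sanity check of the Dirac delta case, the sketch below integrates the conditional vector field $(x_1 - x)/(1-t)$ with forward Euler and confirms that every noise sample ends at the single data point. The target point, the Gaussian noise, and the step count are assumptions chosen for illustration. Note that the last Euler step is taken at $t = 1 - dt$, so the division by $1-t$ never hits zero.

```python
import numpy as np

def conditional_field(t, x, x1):
    """Conditional vector field: points from x toward x1, scaled by 1/(1-t)."""
    return (x1 - x) / (1.0 - t)

rng = np.random.default_rng(0)
x1 = np.array([1.5, -0.5])            # the single data point (assumed)
x = rng.standard_normal((200, 2))     # noise samples at t = 0

n_steps = 50
dt = 1.0 / n_steps
for k in range(n_steps):
    t = k * dt                        # t runs from 0 to 1 - dt
    x = x + dt * conditional_field(t, x, x1)

x_final = x
```

Because the trajectories are straight lines, the Euler steps follow them without discretization error, and all rows of `x_final` coincide with `x1` up to floating-point rounding.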

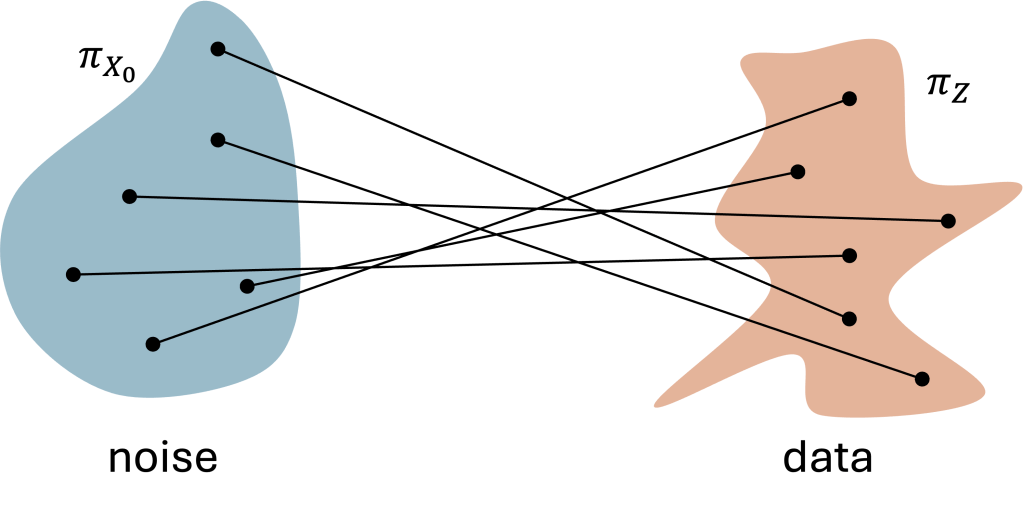

3. When things go wrong and how to fix them

For generic data distributions our reasoning has an important flaw at step 4): the line may not be unique! In fact, there may be infinitely many pairs $(x_0, x_1)$ that satisfy the condition $(1-t)\, x_0 + t\, x_1 = x$.

How to define $u_t(x)$ in this case? (Note that $u_t(x)$ must be a function only of the current state $x$ and time $t$, not of the initial and final points! In other words, the ODE evolution has a “local” view of the system, and can neither predict the future nor trace back to the past.)

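It turns out that the fix is straightforward: it suffices to average the directions $x_1 - x_0$ over all the possible pairs $(x_0, x_1)$ that satisfy the condition $(1-t)\, x_0 + t\, x_1 = x$. More formally,

$$u_t(x) = \mathbb{E}_{(X_0, X_1) \sim \pi}\left[ X_1 - X_0 \mid X_t = x \right] \tag{5}$$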

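Equivalently, we can define $u_t(x)$ as the expected value of the conditional vector field $u_t(x \mid x_1) = \frac{x_1 - x}{1-t}$ over the data distribution, conditioned on the fact that $X_t = x$:

$$u_t(x) = \int u_t(x \mid x_1)\, \frac{p_t(x \mid x_1)\, p_{\text{data}}(x_1)}{p_t(x)}\, dx_1 \tag{6}$$

where $p_t(x \mid x_1)$ is the density of $X_t = (1-t)\, X_0 + t\, X_1$ given $X_1 = x_1$, and $p_t(x) = \int p_t(x \mid x_1)\, p_{\text{data}}(x_1)\, dx_1$ is the density of $X_t$.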
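To see the averaging at work, here is a minimal one-dimensional sketch under assumptions that are not in the text: the data distribution puts equal mass on the two points $-2$ and $+2$, the noise is a standard Gaussian, and noise and data are coupled independently. In that setting, $X_t$ given $X_1 = x_1$ is Gaussian with mean $t\, x_1$ and standard deviation $1-t$, so the weights of the average have a closed form. The function name `marginal_field` is hypothetical.

```python
import numpy as np

def marginal_field(t, x, data_points, data_probs):
    """Average of the conditional fields (x1 - x)/(1-t), weighted by the
    posterior probability of each data point x1 given X_t = x.
    Assumes Gaussian noise N(0, 1) coupled independently with the data."""
    diff = x[:, None] - t * data_points[None, :]              # shape (n, m)
    logits = np.log(data_probs)[None, :] - 0.5 * (diff / (1.0 - t)) ** 2
    logits -= logits.max(axis=1, keepdims=True)               # numerical stability
    w = np.exp(logits)
    w /= w.sum(axis=1, keepdims=True)                         # posterior weights
    cond = (data_points[None, :] - x[:, None]) / (1.0 - t)    # conditional fields
    return (w * cond).sum(axis=1)

data_points = np.array([-2.0, 2.0])
data_probs = np.array([0.5, 0.5])

rng = np.random.default_rng(0)
x = rng.standard_normal(2000)          # noise samples at t = 0

n_steps = 200
dt = 1.0 / n_steps
for k in range(n_steps):               # forward Euler, t from 0 to 1 - dt
    x = x + dt * marginal_field(k * dt, x, data_points, data_probs)

frac_right = np.mean(x > 0)            # share of samples that ended near +2
```

In this toy setting the final samples collect at the two data points, and `frac_right` comes out close to one half, in line with the equal masses of the assumed data distribution.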

4. Flow matching reaches its goal

In this final technical section, we prove that FM reaches its goal, i.e., the final point $x_1$ of the ODE (1) with vector field $u_t(x)$ defined as in (5) or, equivalently, (6) is distributed as the data distribution $p_{\text{data}}$.

Actually, we will prove a slightly more general result: the ODE solution $x_t$ is distributed exactly as the random variable $X_t = (1-t)\, X_0 + t\, X_1$, although their realizations are generated differently. Since $X_1 \sim p_{\text{data}}$, this achieves our goal.

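Our main technical tool is the so-called continuity equation, which states that if the generic probability density function $p_t(x)$, with $p_{t=0} = p_0$, satisfies the following equation:

$$\frac{\partial p_t(x)}{\partial t} = -\nabla \cdot \big( u_t(x)\, p_t(x) \big) \tag{7}$$

then $x_t$, obtained via the ODE (1) with vector field $u_t$ and initial point $x_0 \sim p_0$, is indeed distributed as $p_t$.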

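(Note: $\nabla \cdot$ is the divergence operator, i.e., $\nabla \cdot v(x) = \sum_{i=1}^{d} \frac{\partial v_i(x)}{\partial x_i}$ for any vector field $v(x) \in \mathbb{R}^d$.)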
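The continuity equation can be checked numerically in a case where everything is known in closed form. The sketch below assumes one dimension, a standard Gaussian noise distribution, and a single data point at 2.0, so that the density at time $t$ is Gaussian with mean $2t$ and standard deviation $1-t$, and the vector field is $(2 - x)/(1-t)$. It approximates both sides of the equation with central finite differences; in one dimension the divergence reduces to the derivative with respect to $x$.

```python
import numpy as np

X1 = 2.0  # the single data point (assumed)

def p(t, x):
    """Density of (1-t)*X0 + t*X1 with X0 ~ N(0, 1): N(t*X1, (1-t)^2)."""
    s = 1.0 - t
    return np.exp(-0.5 * ((x - t * X1) / s) ** 2) / (s * np.sqrt(2.0 * np.pi))

def u(t, x):
    """Vector field pointing from x toward X1, scaled by 1/(1-t)."""
    return (X1 - x) / (1.0 - t)

t, eps = 0.3, 1e-5
x = np.linspace(-3.0, 4.0, 50)

# Left-hand side: time derivative of the density.
dp_dt = (p(t + eps, x) - p(t - eps, x)) / (2.0 * eps)

# Right-hand side (without the minus sign): space derivative of the flux u * p.
dflux_dx = (u(t, x + eps) * p(t, x + eps) - u(t, x - eps) * p(t, x - eps)) / (2.0 * eps)

residual = np.max(np.abs(dp_dt + dflux_dx))
```

The quantity `residual` measures how far the two sides are from cancelling on the grid; it is zero up to finite-difference and rounding error.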

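Then, we will show that the density $p_t(x)$ of $X_t$ satisfies the continuity equation (7) with the vector field $u_t(x)$ defined in (6):

$$\begin{aligned}
\frac{\partial p_t(x)}{\partial t} &= \frac{\partial}{\partial t} \int p_t(x \mid x_1)\, p_{\text{data}}(x_1)\, dx_1 && (8) \\
&= \int \frac{\partial p_t(x \mid x_1)}{\partial t}\, p_{\text{data}}(x_1)\, dx_1 && (9) \\
&= -\int \nabla \cdot \big( u_t(x \mid x_1)\, p_t(x \mid x_1) \big)\, p_{\text{data}}(x_1)\, dx_1 && (10) \\
&= -\nabla \cdot \int u_t(x \mid x_1)\, p_t(x \mid x_1)\, p_{\text{data}}(x_1)\, dx_1 && (11) \\
&= -\nabla \cdot \big( u_t(x)\, p_t(x) \big) && (12)
\end{aligned}$$

where in (10) we applied the continuity equation to the conditional vector field $u_t(x \mid x_1)$, whose ODE (4) moves points along straight lines toward $x_1$ and therefore generates the conditional density $p_t(x \mid x_1)$, and in (12) we used the definition (6). Therefore, $x_t$ is indeed distributed as $p_t$ and FM reaches its goal!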

5. Bonus: Equivalence of the two definitions of $u_t(x)$

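For those who want to check the details, we show here that the definitions (5) and (6) of $u_t(x)$ are equivalent. Given $X_t = x$ and $X_1 = x_1$, the starting point is fixed to $X_0 = \frac{x - t\, x_1}{1-t}$, so that $X_1 - X_0 = \frac{x_1 - x}{1-t} = u_t(x \mid x_1)$. Hence,

$$\begin{aligned}
\mathbb{E}\left[ X_1 - X_0 \mid X_t = x \right] &= \mathbb{E}\big[\, \mathbb{E}\left[ X_1 - X_0 \mid X_t = x, X_1 \right] \mid X_t = x \,\big] \\
&= \mathbb{E}\left[ u_t(x \mid X_1) \mid X_t = x \right] \\
&= \int u_t(x \mid x_1)\, p(x_1 \mid X_t = x)\, dx_1
\end{aligned}$$

which equals the definition (6) via Bayes’ theorem, since $p(x_1 \mid X_t = x) = \frac{p_t(x \mid x_1)\, p_{\text{data}}(x_1)}{p_t(x)}$.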
